So I've got this large dataset.call it tData, it's pretty easy to cut it up with a where condition in LINQ, but I want to be able to interrogate another dataset.call it qCategory (which contains the names of specific fields in tData) and return fields from tData which are listed in qCategory.My somewhat misguided attempt so far.I loop through the field names in tData (it's Enumerable so foreach works, same with qCategory), and where ever it matches an entry in qCategory, I build a string. Var query = tData.Where.Select(k = new strFieldNames );This query doesn't fail.LINQ just thinks that strFieldNames is one new fieldname. If I don't use the new keyword, it just fails to compile.Inserting strings doesn't work obviously.is there another way I could iteratively build a field list that would be acceptable in LINQ?

I don't understand what you mean. Have you tried my solution (based on your example)? I've created.net fiddle: here.

Or is there another way altogether?This has to be a common problem.I'm sure someone has solved it.I'm obviously approaching this from a VB background where building strings and submitting them to a SQL engine was old hat.but with LINQ/C# things are a bit different. I'm intrigued by LINQ because it allows be to work on Datasets (in memory databases) with ease, and I'm excited about the potential performance benefits to some apps I've written. It sounds like you're describing a Join operation but I'm not sure. Can you clarify what you're actually trying to do? My guess is you don't actually care about strFieldNames.It would have been a trivial join if qCategory had in columns what it actually holds in rows. (qCategory has one column which lists Categories in rows).I want to select those Categories as fields from tData.

If there was an easy way to transpose qCategory.I guess a join would work. I guess that's one way to do it.

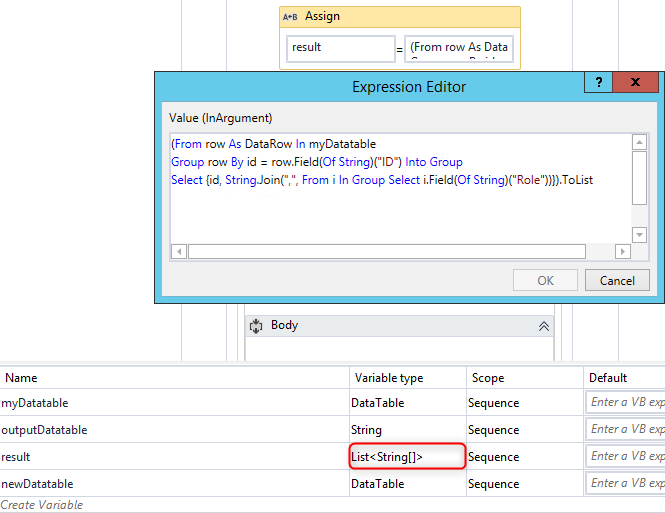

Although that join would only retrieve some of the fields I'd need (I also want certain fixed fields, and the count fields associated with each Category.What's interesting is that I haven't been able to find out what the data type of a query expression is. In most usages, I see it typed as a var (which basically lets the compiler pick the type), but I've seen 1-2 instances where it's been typed as an int32. Obviously, it's not a string type, because mixing it with a string would be easy then.My thinking is that if I knew the correct type, maybe I could iteratively construct a list in a compatible data type and not have these issues.glenthas is right as to my intent though. I want to be able to dynamically choose which fields get shown.

This was trivial to do when I was dealing with SQL strings.it's less than that with LINQ. StrQueryFields = 'FILTER,COLLAPSEDIDENTIFIER,RESPONSETYPE,TYPE,COUNT,' + //starting fixed fields'Communication,CommunicationN,Your Manager,Your ManagerN,' + //Category fields and Counts'Compensation and Benefits,Compensation and BenefitsN,Learning and Development,' +'Learning and DevelopmentN,Your Work and Job,Your Work and JobN,Values,ValuesN,' +'Fairness and Diversity,Fairness and DiversityN,Leadership,LeadershipN,Teamwork,TeamworkN,' +'Strategy,StrategyN,Clients,ClientsN'With the dynamic LINQ though.I could no longer use a Dataset since it is not IQueryable. I had to switch to a DataContext.which waswas a PITA.basically had to setup a SQL Server version of my Access DB, and then map it as a LINQ to SQL class.Anyways.hours wasted later, I set this up:Dynamic LINQ Query expression. Access DBAh sorry, no idea if it plays nice on that.As to the selected fields you have the problem of using a compile time type definition (var) but with runtime columns.

That's never going to work.You can do this with dynamic code gen (though that could be a bitch) essentially generating the data model class on the fly with the relevant column names and types.You can do the construction of the query columns on the fly, you just can't have the result mapped to a strongly defined type unless you happen to know the full set of columns/types up front and can deal with the unset properties in some clean way so as to hide them from whatever subsequent operations/display you are doing on them. Access works fine with LINQ as long as you stick with Datasets (via the appropriate table adapter and OLEDB provider). AFAIK, there is no way to get Access into an DataContext though. Basically you can use LINQ to Datasets to your heart's content with Access as the source DB/data provider. The problem with Datasets though is that they are not of the IQueryable type (and so LINQ to SQL obviously does not work).The problem with Dynamic LINQ is that it depends on LINQ to SQL and the query data source must be of the IQuerable type.

Not sure I fully understand this explanation. Not sure I fully understand this explanation. That is interesting code you have up there. It looks good, I will give it a try. Basically it's a MAKE TABLE query, always a convenient workaround when strung together queries got too complex.you'd just make your results into a table, and then keep stringing query logic onto it until you get your result.That's how my current program in Access works.basically transforming, filtering and processing data until it's in a table which can be graphed easily.

I've recently been rethinking the approach I took in solving the problem in Access with dynamic SQL. Most of the code was just strung together query logic mixed in with VB. Lots of repetition and lots of intricate complexity as I had to keep layering on conditional logic to either add features, deal with mission scope changes, and deal with data driven issues as well.At the end of the day what was I doing?I was comparing the results of one row in a table to a user chosen number of other rows in the same table for a user chosen number of columns. And I was graphing the results while also doing statistical significance calculations on the differences in the results.In some ways I was seeing how far I could replicate this process with LINQ.but I see that it's a bit of a difficult proposition. What I want is both rows and columns that I specify to be my results.then I want to be able to process calculations on those results and graph them. In some ways I was seeing how far I could replicate this process with LINQ.but I see that it's a bit of a difficult proposition.

What I want is both rows and columns that I specify to be my results.then I want to be able to process calculations on those results and graph themIt is possible that you could do something in c# 4 with a custom dynamic implementation that deals with the variable name/number of columns returned using this may well be ugly as hell though so I mention it mainly for exploration rather than as a recommendation. I have no idea how dynamic will play with Linq, perhaps not at all (though I will probably try to find out soon). Just an update.I re-thought and approached the problem from a new angle entirely. I reorganized the database/table structure of my data by transposing from wide to long.basically collapsing the 120 plus wide fields into two columns (scores and counts), and then splitting different types of data into separate tables as well. Then I created associated lookups to create some table relationships.This radically changes things since I no longer have to care about selecting columns for my result datasets.instead of projection, I can now just use selection (.Where conditions instead of.Selects) to get at the fields since they are now rows instead of columns.The downside of the rethinking: the dataset size ballooned by a factor of 10. And a 600 record dataset with about 120 fields became a 150,000 record dataset with 6 fields. I split the single table up into 4 smaller tables and built some lookups.then ported it all into Access and SQL Server.

Despite the much bigger data footprint, it's still worth it in my opinion.For the moment, I am going to keep both Access and SQL Server versions of my database so I can go both the Dataset and DataContext route in my code. And a 600 record dataset with about 120 fields became a 150,000 record dataset with 6 fields. I split the single table up into 4 smaller tables and built some lookupsI can't imagine this was actually necessary. 150,000 rows really isn't all that many. Were you actually seeing performance issues prior to doing this?This was a smaller test dataset I was working with.

Datasets I am seeing with real life projects are considerably bigger. On a current project, the same equivalent dataset would be approaching a million records.Scalability is big deal. While I agree Access may well have problems with this, SQL Server shouldn't have any issues with a million record table.Agreed - it should have no issues with this. To give an example, I recently noticed basic queries had gotten pretty slow (about 30 seconds) on a particular table in one of our databases. I noticed the table had no indexes. I created some indexes and queries are near-instantaneous again. I just checked, and that table has 24 million rows (for the record, it took 7 seconds to perform the COUNT(.)).